Google has confirmed that its AI-powered tool Big Sleep identified and stopped a critical zero-day SQLite vulnerability (CVE‑2025‑6965) before it could be exploited in the wild.

Google says it believes this is the first time an AI agent has directly foiled an attempt to exploit a vulnerability in the wild - marking a shift from reactive to proactive cybersecurity.

What Google Is Saying

According to Google and reporting by The Record, Big Sleep, working alongside Google's threat intelligence, spotted the vulnerability, which was already known to threat actors but hadn't yet been used in an attack.

“Big Sleep successfully neutralized CVE-2025-6965 before it reached public deployment. It was never live. That’s a milestone.”

Google representative via The Record

What That Means (In Human Words)

Most cybersecurity systems work after something bad happens. Big Sleep didn’t wait. It read the signs, connected the dots, and intervened before damage could occur.

Here's the twist: the bug hadn't been publicly disclosed yet. That means Big Sleep picked up on intent to exploit, not just an open door. By Google's account, this kind of foresight hadn't been demonstrated at this level - until now.

Let’s Connect the Dots

🕳️ What Does “Zero-Day Vulnerability” Mean?

A zero-day vulnerability is a security flaw that no one has patched yet - but attackers already know it exists.

It’s called “zero-day” because developers have had zero days to fix it.

If hackers strike before a fix is available, it becomes a zero-day attack - one of the hardest threats to stop.

So here’s the usual timeline:

- Vulnerability exists
- Hackers find it
- Attack happens
- The company scrambles to patch
- Everyone updates their software (hopefully)

But that’s not what happened this time.

How Did Big Sleep Catch It Before It Was Publicly Exploited?

Google’s AI didn’t wait for the attack. It read the signs.

Big Sleep used advanced AI pattern recognition and real-time threat intelligence to spot a vulnerability (CVE-2025-6965) already being prepared by threat actors. The bug had not been publicly disclosed, but it was already known to attackers who knew where to look.

Here’s how Google likely knew:

- Threat actors were testing the vulnerability
- Clues were found in unusual GitHub activity, exploit signals, or dark web chatter
- Big Sleep flagged it before it could be weaponised

So yes - this was a true zero-day.

But Big Sleep stopped it before it became an attack.

A rare win in cybersecurity.

The Role of AI in Cyber Protection

Traditional cybersecurity tools work like alarms - they go off after something suspicious happens.

AI is different. It can think ahead.

Big Sleep didn’t just scan for known threats. It connected signals, understood intent, and acted before the exploit reached the public.

That’s the new game:

- AI hunts for patterns, not just signatures
- It learns from billions of data points - faster than any human team
- And it gets smarter every time it spots something new

With tools like Big Sleep, cybersecurity shifts from reaction to prevention.

Instead of waiting for the fire, AI finds the spark.

And in this case?

It snuffed it out before anyone got burned.

AI in Attack vs. AI in Defence

AI isn’t just protecting systems - it’s also being used to break them.

Here’s how the roles differ:

| | AI in Attack 🧨 | AI in Defence 🛡️ |
|---|---|---|
| Purpose | To find weaknesses faster than humans can | To stop threats before they cause damage |
| Tactics | Automates phishing, builds smarter malware | Detects patterns, predicts exploits |
| Speed | Launches attacks at scale and speed | Reacts instantly and preemptively |
| Adaptability | Learns how defences work - then evades | Learns from attacks - then adapts faster |
| Real World | Deepfakes, AI-powered ransomware | Tools like Big Sleep, Sec-Gemini, FACADE |

Bottom Line

🧯 The Vulnerability

- CVE-2025-6965 is a critical memory corruption flaw in SQLite - not MySQL.
- According to the CVE description, the number of aggregate terms in a query could exceed the number of columns available, corrupting memory in a way that could let attackers read or modify it.
- The flaw exists in versions prior to 3.50.2.

🛠️ The Fix

- Google’s AI tool Big Sleep spotted this vulnerability using real-time threat signals and stopped it before it could be exploited in the wild.
- The issue was patched in SQLite version 3.50.2, released on June 28, 2025.

🔧 How to Update SQLite

If you use SQLite directly (in apps, systems, or embedded tools), you need to update immediately:

```shell
# For Ubuntu/Debian systems
sudo apt update && sudo apt install sqlite3 libsqlite3-0

# For macOS (Homebrew)
brew upgrade sqlite
```

- If your application bundles SQLite internally, rebuild and redeploy it using version 3.50.2 or later.
- Most major package managers have already rolled out the patch - check your environment to confirm.
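To confirm what your environment actually has, a quick check along these lines may help. This is a minimal sketch, assuming the `sqlite3` command-line tool and/or `python3` are on your PATH; the function name `report_sqlite_versions` is made up for illustration. It prints the version of the CLI and the version of the SQLite library linked into Python, which are separate copies and can differ.

```shell
# report_sqlite_versions (hypothetical helper): print the SQLite version
# seen by the sqlite3 CLI and by the library linked into python3.
report_sqlite_versions() {
    if command -v sqlite3 >/dev/null 2>&1; then
        # "sqlite3 --version" prints the version number as its first field
        echo "sqlite3 CLI: $(sqlite3 --version 2>/dev/null | awk '{print $1}')"
    else
        echo "sqlite3 CLI: not found"
    fi

    if command -v python3 >/dev/null 2>&1; then
        # sqlite3.sqlite_version is the version of the linked SQLite library
        echo "python3 library: $(python3 -c 'import sqlite3; print(sqlite3.sqlite_version)' 2>/dev/null)"
    else
        echo "python3 library: not found"
    fi
}

report_sqlite_versions
```

Other runtimes and desktop apps ship their own copies of SQLite too, so a patched CLI does not by itself mean every application on the machine is patched.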

❌ No Action Needed for MySQL

This bug does not affect MySQL.

If you’re running MySQL, you're safe - this is strictly a SQLite issue.

🔐 Final Check

- ✅ If you’re on SQLite 3.50.2 or later - you’re patched.
- ⚠️ Still running older versions? Time to update.

Big Sleep handled the early warning.

Now it’s your turn to lock the door.
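The "3.50.2 or later" rule from the final check can be sketched as a small shell helper. This is an illustration only: `FIXED_VERSION`, `version_ge`, and `check_sqlite_version` are hypothetical names, and the comparison assumes plain dotted numeric versions such as `3.49.1`. Each component is compared as a number, because a plain string comparison would wrongly rank 3.9.0 above 3.50.2.

```shell
# Oldest SQLite release that contains the fix, per the article.
FIXED_VERSION="3.50.2"

# version_ge A B: succeeds (exit 0) when dotted version A >= B.
# Components are compared as numbers, so 3.9.0 sorts below 3.50.2.
version_ge() {
    awk -v a="$1" -v b="$2" 'BEGIN {
        n = split(a, x, ".")
        m = split(b, y, ".")
        max = (n > m) ? n : m
        for (i = 1; i <= max; i++) {
            xi = (i <= n) ? x[i] + 0 : 0   # missing components count as 0
            yi = (i <= m) ? y[i] + 0 : 0
            if (xi > yi) exit 0
            if (xi < yi) exit 1
        }
        exit 0   # all components equal
    }'
}

# check_sqlite_version VERSION: prints "patched" or "update needed".
check_sqlite_version() {
    if version_ge "$1" "$FIXED_VERSION"; then
        echo "patched"
    else
        echo "update needed"
    fi
}

check_sqlite_version "3.50.2"   # prints: patched
check_sqlite_version "3.49.1"   # prints: update needed
```

To check the installed CLI, you could pass it the real version: `check_sqlite_version "$(sqlite3 --version | awk '{print $1}')"`. Distribution packages sometimes backport security fixes without changing the upstream version number, so an "update needed" result on such a system is a cue to check the distribution's security advisory, not proof of exposure.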

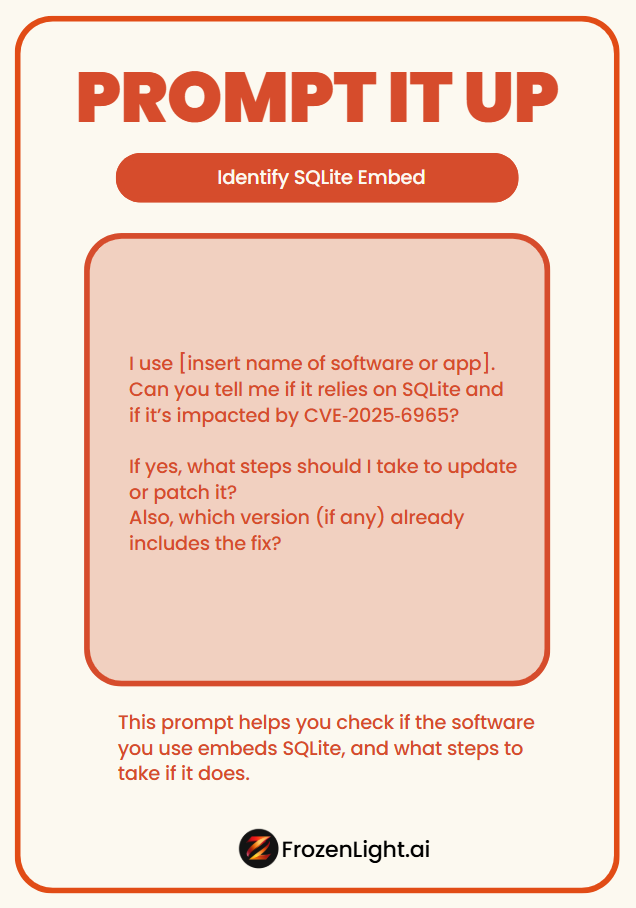

Prompt It Up: Is My Software Impacted by CVE‑2025‑6965?

Not sure if the tools you use are affected by the SQLite zero-day bug?

This prompt helps you check if the software you use embeds SQLite, and what steps to take if it does.

📋 Copy & Paste Prompt

I use [insert name of software or app].

Can you tell me if it relies on SQLite and if it’s impacted by CVE‑2025‑6965?

If yes, what steps should I take to update or patch it?

Also, which version (if any) already includes the fix?

Example

I use Adobe Lightroom.

Does it use SQLite and is it impacted by CVE‑2025‑6965?

What can I do to patch or confirm I'm protected?

This prompt works with ChatGPT, Claude, Gemini, or any trusted LLM.

You don’t need to know your system inside out - just ask, and follow the steps.

Frozen Light Team Perspective

AI works both sides of the fence now.

We need to understand - this is the new reality.

This story isn’t just about Google’s Big Sleep stopping a zero-day (though we’re glad it did - otherwise, this article would’ve been a lot more alarming).

It’s about something bigger:

AI is no longer waiting for breaches. It’s watching behaviour.

And that’s true for everyone - attackers and defenders alike.

Let’s break it down.

When attackers use AI, they’re studying your system to find the weak spot.

When defenders use AI, they’re studying how the system behaves - to catch the signal before the break-in.

It's cops and robbers - both with AI.

But only one side needs to miss a signal for real damage to happen.

Big Sleep didn’t find a known bug.

It watched how the software was behaving, picked up malicious intent in progress, and shut it down.

From there, the patch race began.

That’s observation, prediction, and response - the real power of well-trained AI.

Because that’s what pattern-based thinking does:

If AI is trained right, it can use the past to predict the future - faster than an attacker can exploit it.

Here’s the bigger point:

In any AI strategy, don’t forget what AI is.

Train it to do its job - or don’t expect it to do the job for you.

This time, it played out in our favour.

Make sure your AI strategy plays out in yours.