What a New Study Reveals About Human Trust, Emotion - and the Limits of Machines

The promise of AI is everywhere. It can diagnose disease, write essays, answer legal questions, and hold a surprisingly fluent conversation. But can it care? More importantly - can it convince us that it cares?

A new study led by Prof. Anat Perry from the Hebrew University of Jerusalem dives into this exact question, and the findings are both fascinating and deeply human.

The Rise of Empathic AI - and Why It Matters

As loneliness surges worldwide (recognized even by governments like the UK, which now has a Minister for Loneliness), the idea of machines that offer emotional support is more than science fiction. AI companions, mental health chatbots, and emotionally intelligent interfaces are being developed as tools to combat social isolation and assist in mental well-being.

But there’s a gap between sounding empathic and being believed.

The Experiment

Perry’s team recruited 1,000 participants and asked them to write about something emotionally difficult - like a family illness or personal loss. Participants were then given a response that appeared to come either from a human or from ChatGPT.

The twist? All the responses were generated by AI.

Despite being thoughtfully crafted and emotionally aware, the responses were rated less empathetic when participants thought they came from a machine. Not because of the language - but because of the perceived source.

This finding points to a psychological truth: even the most well-worded, compassionate message loses impact when we believe it’s artificial.

Breaking Down Empathy: Three Core Components

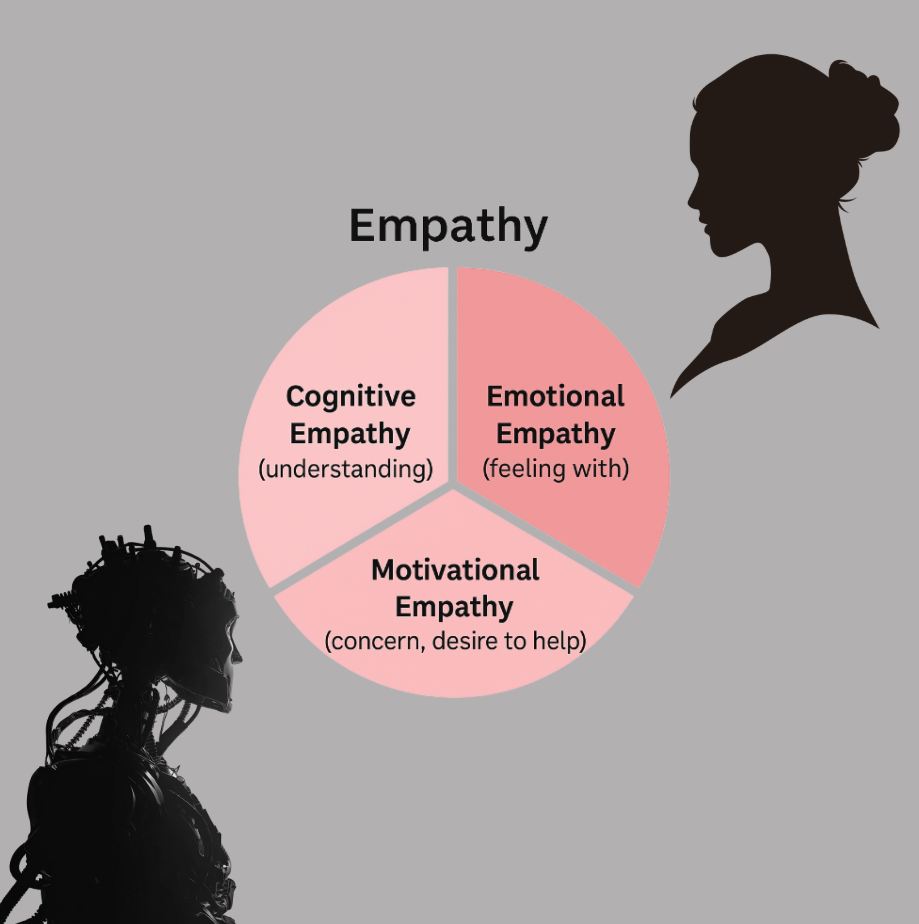

According to Prof. Perry, empathy consists of three elements:

- Cognitive empathy - understanding what someone else feels. AI can do this well.
- Emotional empathy - feeling something in response to another’s emotion. AI can simulate this, but we know it doesn’t feel.
- Motivational empathy - the urge to help. This is where machines hit a wall.

AI can recognize distress and craft the “right” reply. But it doesn’t care - not in the way a human friend, therapist, or even a stranger might. And we feel the difference.

Why It Matters

This isn’t just philosophical. As more tools are developed for healthcare, customer service, education, and personal relationships, empathy becomes a currency. If we can’t trust empathy, we’re less likely to engage.

Even users of apps like Cocobot (tested in the study) often gave glowing feedback - until they realized no human was behind the words. Once the illusion broke, many lost interest.

Humans Still Matter

One of the most important takeaways? When it comes to emotional support, people still prefer other people. We value the idea that someone truly sees us, understands us, and chooses to help - not because they’re programmed to, but because they want to.

AI can mimic that behavior - but not the intent. And humans sense that difference.

Even when technology is seamless, our instincts crave something more: authenticity.

Where Do We Go From Here?

Prof. Perry’s research doesn’t argue against using AI for mental health, companionship, or empathy simulation. On the contrary, it shows how powerful these tools can be - and how much more nuanced they need to become.

But it also serves as a reminder: We’re not just emotional beings - we’re relational ones.

In a world increasingly shaped by AI, the most valuable trait may not be intelligence at all - but humanity.

Read the full study: Comparing the Value of Perceived Human versus AI-Generated Empathy by Prof. Anat Perry