Spotify published AI-generated songs on the official artist pages of deceased musicians like Blaze Foley and Guy Clark - without permission from their estates.

The songs were uploaded by a third party using TikTok’s distribution platform SoundOn and credited to a company called Syntax Error.

Spotify’s automated system matched the artist name metadata and slotted the songs directly into the artists’ real profiles - as if they were official releases.

After being called out publicly, Spotify removed the tracks. But the system that allowed it? Still running.

🗣️ What Spotify Is Saying

As of now: Silence.

Spotify has not released any official comment or statement.

🔍 What That Means (In Human Words)

The uploader submitted AI-generated songs using the names of deceased artists.

The songs were distributed through SoundOn.

Spotify’s system matched the artist name metadata to existing official artist pages.

The songs were published under those artists’ profiles.

No manual approval or estate verification was required.

The songs appeared as official releases.

They were removed after being publicly flagged.
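To make the gap concrete, here is a minimal sketch of what name-only matching looks like next to a version that also checks who is uploading. Spotify's real matching logic isn't public, so everything here is an assumption for illustration: the `ArtistPage` and `Delivery` types, the `verified_uploaders` field, and both functions are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)
class ArtistPage:
    page_id: str
    name: str
    verified_uploaders: frozenset  # hypothetical: accounts the artist or estate approved

@dataclass(frozen=True)
class Delivery:
    track_title: str
    artist_name: str  # free-text metadata typed in by the uploader
    uploader_id: str

def normalize(name: str) -> str:
    """Lowercase and collapse whitespace so 'blaze  foley' equals 'Blaze Foley'."""
    return " ".join(name.casefold().split())

def match_by_name_only(delivery: Delivery, pages: list) -> Optional[str]:
    """Attach the track to the first page whose name matches. Nothing else is checked."""
    for page in pages:
        if normalize(page.name) == normalize(delivery.artist_name):
            return page.page_id
    return None

def match_with_verification(delivery: Delivery, pages: list) -> Optional[str]:
    """Same name match, but the uploader must also be approved for that page."""
    for page in pages:
        same_name = normalize(page.name) == normalize(delivery.artist_name)
        if same_name and delivery.uploader_id in page.verified_uploaders:
            return page.page_id
    return None

# Hypothetical data: one real-looking page, one third-party upload.
pages = [ArtistPage("page-1", "Blaze Foley", frozenset({"estate-account"}))]
upload = Delivery("Hypothetical Track", "blaze foley", "third-party-uploader")

print(match_by_name_only(upload, pages))       # page-1
print(match_with_verification(upload, pages))  # None
```

The only difference between the two functions is one membership check. That check is the "estate verification" step this story says was missing.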

🧩 Connecting the Dots - Scam

At first glance, it looks like a glitch.

Someone uploaded an AI-generated song to Spotify under a dead artist’s name.

You might think:

Was the AI any good?

Did it sound like the real artist?

Was this a deepfake moment?

We’ve heard those narratives before (see our story about the AI-generated DJ called "Thy").

But this isn’t just about how far AI has come.

This is about something bigger.

Let’s see why.

💸 Who gets the money

- Uploader creates a distributor account (e.g., SoundOn, DistroKid, TuneCore)

  Adds payee details and banking.

- Uploader sets themselves as the rights holder

  They choose splits and ownership in the upload form.

- Distributor delivers the track to Spotify

  Metadata includes the performing artist name (e.g., “Blaze Foley”).

- Streams happen on the artist’s page

  Spotify tracks plays for that specific track.

- Spotify pays the distributor

  Royalties are calculated by Spotify’s payout formula and reported to the distributor.

- Distributor pays the uploader

  Payout goes to the uploader’s account, minus the distributor’s fee or cut and any chosen splits.

- Real artist or estate gets nothing by default

  They only receive money if they control the release, have a split set up, or file a claim that’s accepted and redirects or withholds royalties.
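The money trail above can be sketched in a few lines. This is an illustration, not Spotify's or any distributor's actual payout formula: the function `split_payout`, the flat per-stream rate, and the 10% distributor cut are all placeholder assumptions picked to make the arithmetic easy to follow.

```python
from decimal import Decimal

def split_payout(streams, per_stream_rate, distributor_cut, splits):
    """Return what each payee receives for one track.

    streams          number of plays of this specific track
    per_stream_rate  assumed flat rate per play (placeholder, not a real figure)
    distributor_cut  assumed fraction kept by the distributor (placeholder)
    splits           payee -> share of the net payout, set by the uploader
    """
    if sum(splits.values()) != Decimal(1):
        raise ValueError("splits must add up to 1")
    gross = Decimal(streams) * per_stream_rate   # platform pays the distributor
    net = gross * (1 - distributor_cut)          # distributor keeps its cut
    return {payee: (net * share).quantize(Decimal("0.01"))
            for payee, share in splits.items()}

# The uploader named themselves as the only rights holder in the upload form.
payouts = split_payout(
    streams=3000,                      # "a few thousand plays"
    per_stream_rate=Decimal("0.003"),  # placeholder assumption
    distributor_cut=Decimal("0.10"),   # placeholder assumption
    splits={"uploader": Decimal("1")},
)

print(payouts["uploader"])                   # 8.10
print(payouts.get("estate", Decimal("0")))   # 0
```

The estate's share is zero because the estate never appears in `splits`, not because anyone decided it should be. Whoever fills in the upload form decides who exists in the payout.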

The Business Model Is the Scam

- They didn’t touch existing songs.

  No one altered or replaced the real artist’s music.

  They just added new songs to the official page - quietly, without fanfare.

- They targeted dead or inactive artists.

  Why? Because no one’s watching.

  No active fans waiting for drops.

  No manager checking analytics daily.

  No estate running audits every month.

- Each fake song created its own new revenue stream.

  Even if a track only got a few thousand plays, that’s money.

  It didn't cannibalize the old tracks. It just rode the reputation wave.

- Multiply that across multiple artists.

  Upload 10 songs under each of 5 different dead artists = 50 new revenue paths.

  Each with its own streaming potential.

  Each with no real oversight.

- The scam doesn’t need to go viral.

  It only needs to blend in - and Spotify's system helped it do exactly that.
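The multiplication is simple, but spelling it out shows why scale matters more than any single track. A tiny sketch, with placeholder artist names and a hypothetical helper called `revenue_paths`:

```python
from itertools import product

def revenue_paths(artists, songs_per_artist):
    """Each (artist, song number) pair is its own separately counted track."""
    return list(product(artists, range(1, songs_per_artist + 1)))

# Placeholder names; the count is the only thing that matters here.
artists = ["artist_1", "artist_2", "artist_3", "artist_4", "artist_5"]
paths = revenue_paths(artists, songs_per_artist=10)

print(len(paths))  # 50
```

Every entry in `paths` is counted and paid out on its own, so no single one has to perform well for the total to add up.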

📉 Bottom Line

- AI-generated songs were uploaded through TikTok’s SoundOn.

- The uploader listed dead artists as the performers.

- Spotify’s system placed the tracks on official artist pages using automated metadata matching.

- No estate was contacted.

- The songs appeared as legitimate new releases.

- Spotify removed the songs only after they were publicly flagged.

- The system that allowed this is still live.

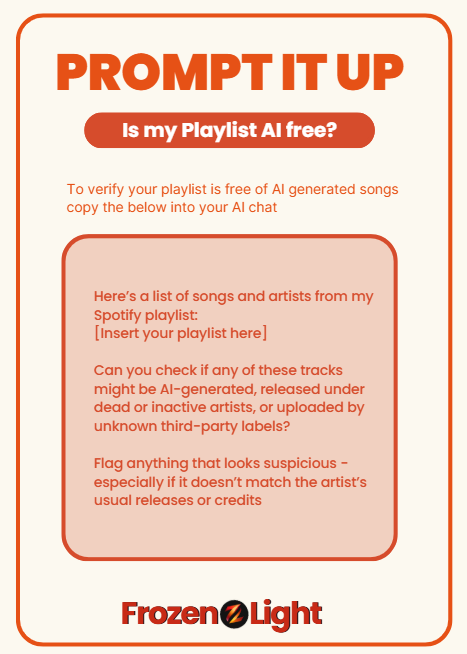

Prompt It Up: Are You Listening to AI-Generated Songs Without Knowing?!

We’re guessing you’re a little curious...

Maybe you’ve already fallen into the trap of streaming AI-generated songs without even realizing it 👀

If you’ve got a Spotify playlist you love - let’s put it to the test.

🧊 Just copy and paste this prompt into any LLM with web access (like ChatGPT Pro, Gemini, or whatever you use):

“Here’s a list of songs and artists from my Spotify playlist:

[Insert your playlist here]

Can you check if any of these tracks might be AI-generated, released under dead or inactive artists, or uploaded by unknown third-party labels?

Flag anything that looks suspicious - especially if it doesn’t match the artist’s usual releases or credits.”

Wanna go deeper?

Add this too:

“Let me know if any of these artists are no longer active or have passed away, and whether the release dates seem out of place.”

That’s it. You just turned your AI into a fake song detector.

Because if Spotify’s not checking - someone should.
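If you'd rather not rely on an LLM for the "release dates seem out of place" part, the same check can be sketched in plain Python. The two death dates below are public record. Everything else is an assumption for illustration: the playlist rows are made up, and `flag_posthumous` is a hypothetical helper, not a real tool.

```python
from datetime import date

# Public record: Blaze Foley died 1 Feb 1989, Guy Clark died 17 May 2016.
DEATH_DATES = {
    "Blaze Foley": date(1989, 2, 1),
    "Guy Clark": date(2016, 5, 17),
}

def flag_posthumous(tracks, death_dates):
    """Return tracks released after the credited artist's death.

    tracks is a list of (title, artist, release_date) tuples.
    Artists missing from death_dates are skipped, not flagged.
    """
    flagged = []
    for title, artist, released in tracks:
        died = death_dates.get(artist)
        if died is not None and released > died:
            flagged.append((title, artist, released))
    return flagged

# Made-up playlist rows, for illustration only.
playlist = [
    ("Hypothetical Track A", "Blaze Foley", date(2025, 7, 1)),
    ("Hypothetical Track B", "Guy Clark", date(1975, 1, 1)),
    ("Hypothetical Track C", "Some Living Artist", date(2024, 1, 1)),
]

for title, artist, released in flag_posthumous(playlist, DEATH_DATES):
    print(f"Worth a closer look: {title} ({artist}, {released})")
```

A flag here is a reason to look closer, not proof of a fake. Estates do put out legitimate posthumous releases and reissues, so the next step is checking the label and credits.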

Frozen Light Team Perspective

This is not news about AI and deepfakes.

This is news about AI working for both sides.

What do we mean?

We’ve seen it in hacking - where bots are used by attackers, but also by defenders.

AI ends up operating on both ends of the system.

And this story feels like that.

Because what we’re looking at here isn’t just another AI-generated song.

It’s a business plan.

A new kind of one.

The kind we wish didn’t exist.

The kind we hope AI would help prevent - not power.

But that's where we are.

Someone built a scam.

And AI was dragged into it.

It wasn’t the main character.

It didn’t design the trick.

But it also wasn’t on the side that should have protected us from it.

That’s the issue.

The AI helped make the song.

The platform helped push it live.

And the system that should have stopped it didn’t show up.

We’re not saying the AI was the scammer.

But in this case - it wasn’t working for us either.